Content Traffic is Vanity. Training Data is the Moat.

TL;DR

I was cleaning up abandoned experiments on tabiji.ai and noticed our dormant public API is getting 4,667 requests a week — 83% of it Meta’s crawler. Flattering, but the wrong metric. AI web search (what the crawler is doing) only patches gaps in a model’s training data — yesterday’s pricing, this week’s news. Training data itself is where brand presence compounds, and frontier training runs cost hundreds of millions of dollars and refresh every 6–18 months. So I uploaded tabiji’s whole dataset — 8,799 records, 11.2 MB, Parquet, CC-BY-4.0 — to Hugging Face, where OpenAI, Anthropic, Meta, and Mistral source their training corpora. One upload, permanent citation, zero ongoing bandwidth. Full play below.

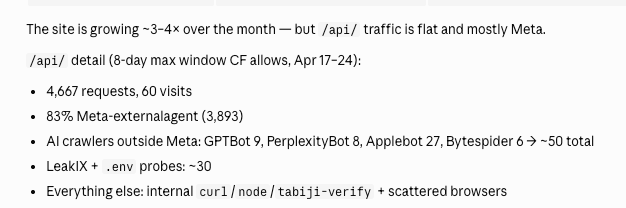

I was going through old tabiji.ai experiments last week, decluttering. The public /api/ endpoint was in there — an afterthought we shipped early, barely marketed, mostly forgot about. Before I killed it, I pulled up the Cloudflare dashboard to see what, if anything, it was doing.

/api/ endpoint. The humans are the noise. The bots are the signal.4,667 requests over 8 days. 60 human visits. 83% of the non-visit traffic is Meta’s external crawler. GPTBot, PerplexityBot, Applebot, Bytespider, and the long tail of AI crawlers account for roughly another 50. The rest is LeakIX / .env probes, internal curl, and scattered browsers. The API we forgot about was, quietly, an LLM-feeding endpoint.

My first reaction was to feel flattered. My second reaction was to ask whether “the LLM crawlers are reading my API” is actually the metric I want to be chasing.

It’s not.

Two layers: web search vs. training data

The AEO post I wrote a couple weeks back broke down the three layers of an AI response — training data, validation search, and memory. What the Meta crawler hitting my API represents is the validation layer: agents fetching fresh data at query time to fill gaps in their training corpus. “Is tabiji’s pricing still current? Did any advisories change this week?” That’s useful, and the brand can show up in the answer for that specific query. But it’s single-turn impact — the model isn’t learning anything about tabiji from one JSON response during inference.

The training-data layer is the one that compounds. Content that makes it into the corpus gets baked into the model weights. Every query against that model — for the next year or so, until the next refresh — has some probability of surfacing your entity, your framing, your voice. You don’t have to be fetched again. You’re already in there.

Why training data is a moat

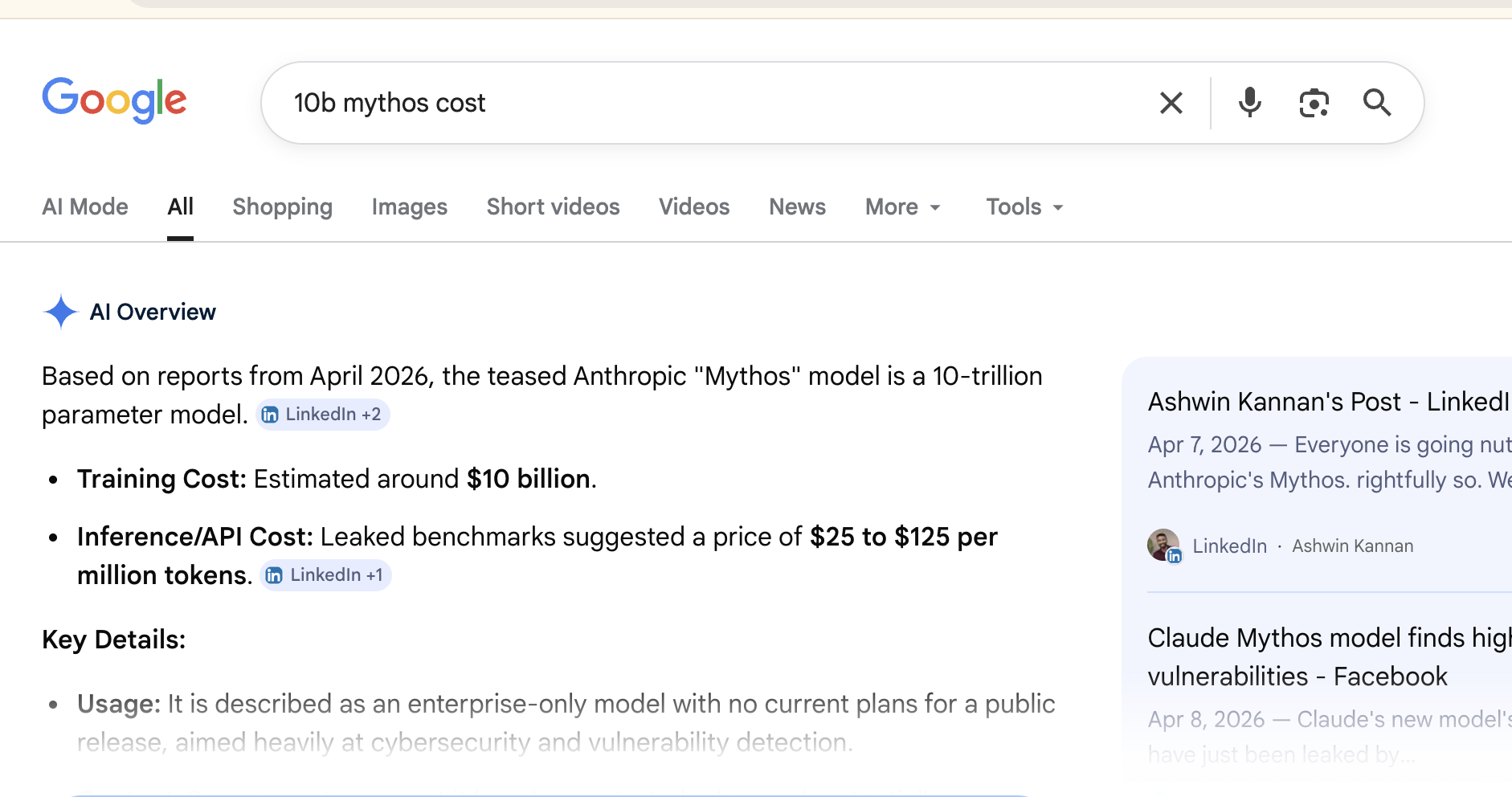

Training runs are expensive and infrequent. Epoch AI pegs GPT-4.5’s training cost around $340 million and Grok 4’s around $390 million. The widely-circulated claim that Anthropic’s “Mythos” cost $10 billion traces back to a single tweet, not to Anthropic — the real number’s murkier, but every credible estimate puts frontier runs in the hundreds of millions, minimum.

What that cost means for you: training corpora don’t get refreshed casually. Data cutoffs run 6–18 months behind model release dates. Whatever made it into the corpus is riding a very long wave. Whatever didn’t is waiting at least another refresh cycle, usually longer.

If your content exists on a website — a normal 2,000–5,000-word blog post written for humans to read — crawlers may or may not pick it up, may or may not clean it into a useful training row, may or may not deduplicate it against other sources that said the same thing louder. It’s a lottery ticket with unknown odds.

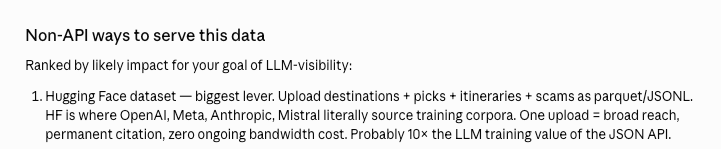

The move: Hugging Face

The single highest-leverage thing I could do — and the thing I did — was upload tabiji’s whole dataset to Hugging Face. HF is where OpenAI, Anthropic, Meta, and Mistral literally source training corpora. It’s not a crawl target where your content might make it through preprocessing — it’s a pre-curated, license-tagged, schema-clean distribution point that’s already in the pipeline.

What I uploaded:

- 6,498 destinations — climate, currency, language, plug type, tap-water safety, tipping, visa notes, coordinates

- 443 itineraries — day-by-day activities with logistics and timing

- 396 city-level scam guides — how each scam works, how to avoid, police contacts (the same content the 733 AI-generated comics illustrate)

- 250 countries, 55 safety profiles, 208 travel advisories (aggregated from US State Dept. and UK FCDO), and 949 head-to-head destination comparisons with Reddit quotes and verdicts

Total: 8,799 records, 11.2 MB, Parquet, CC-BY-4.0. One upload. Permanent citation back to tabiji.ai. Zero ongoing bandwidth cost.

The deeper shift is in serving format. The content on tabiji.ai was written for humans — long-form articles with hooks, narrative, and words like “probably.” The HF dataset is the same knowledge re-expressed in the shape agents actually consume — structured rows with explicit fields and source provenance. Same information, different serving format. The website stays for humans. The dataset ships for machines.

How I’ll know it worked

Honestly, I don’t know yet. The measurement layer for training-data influence is primitive. I added a GA4 annotation on the upload date so I can line up forward metrics against the intervention, and I’ll watch a few signals:

- Leading: citations of tabiji.ai in ChatGPT / Claude / Gemini answers to travel-safety queries. Direct-traffic spikes on specific destinations that match dataset rows.

- Lagging: the next Llama, Claude, and Gemini training runs. If tabiji made it into the corpus, brand mentions downstream should go up without any new content effort.

Ask me in twelve months whether it worked. The AEO playbook as a field is unfolding fast enough that the honest answer to “how do you measure this” is “improvise, and annotate the graph.”

The broader play

Hugging Face is the biggest lever, not the only one. The pattern is simple: ask where training data actually comes from, then show up there.

- Structured data on GitHub — also crawled, also cleaned, also attributed.

- Wikipedia citations where genuinely appropriate — high training-data signal per citation.

- Reddit presence — Reddit’s data is explicitly licensed to Google and is heavily weighted in most frontier corpora.

- Internet Archive mirrors — preserves content through URL changes and site shutdowns, increasing the odds it gets crawled cleanly.

The internet was built for humans to read. Agents read it differently. If your content strategy is still “rank on Google for keywords,” you’re optimizing for a consumption surface the new buyer doesn’t use.

Put your data where models go shopping.